Visualizations in 3D

The Ouster Python SDK provides two visualization utilities for user convenience. These are introduced briefly below.

Visualization with Ouster’s SDK CLI ouster-cli

Ouster’s OpenGL-based visualizer allows for easy visualization from pcaps and sensors on all platforms the Ouster SDK supports.

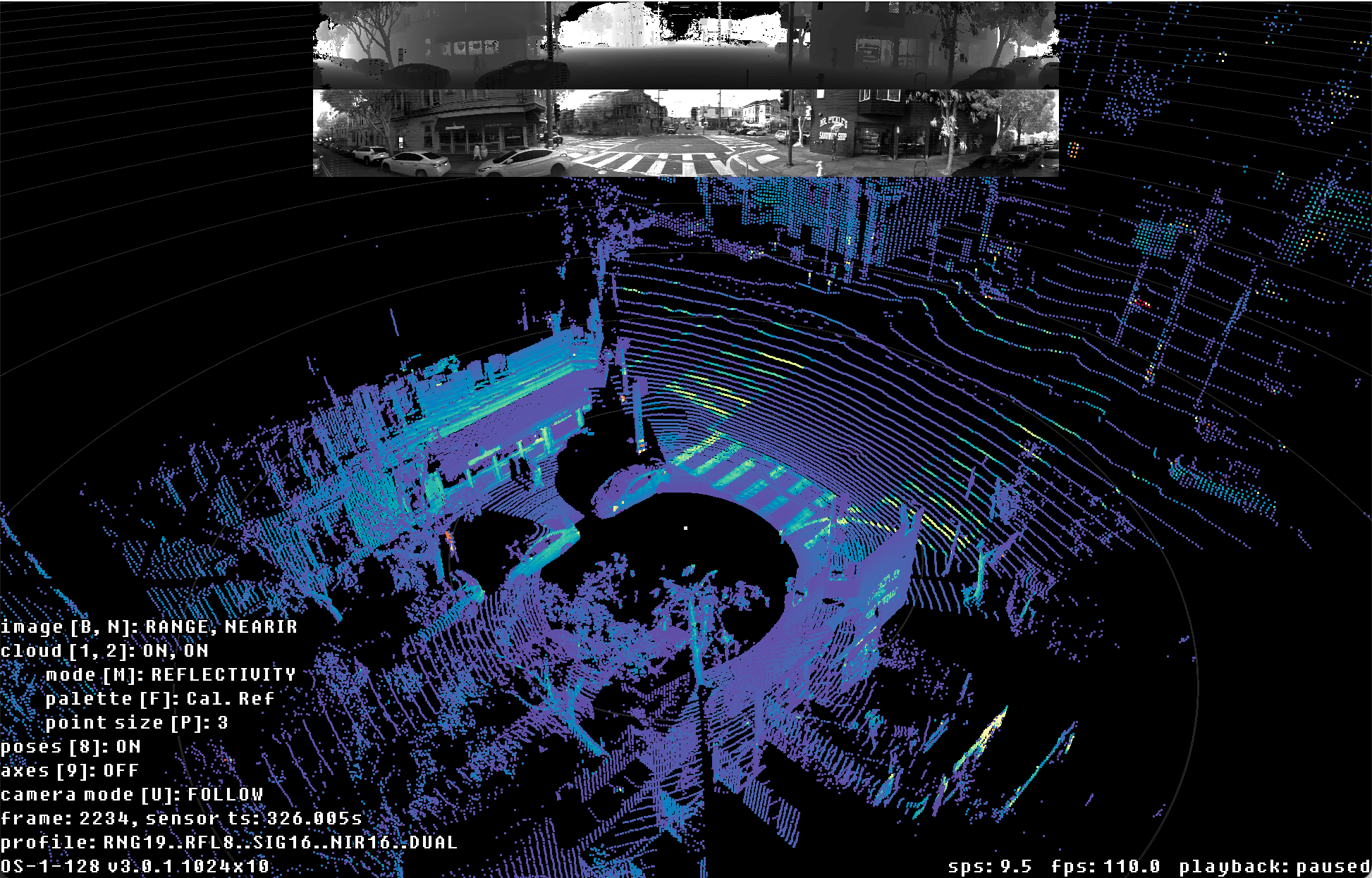

The default Ouster SDK CLI ouster-cli source <sensor | pcap | osf> viz visualizer view shows two 2D range images at the top, each of which can be cycled through the available fields, and a 3D point cloud at the bottom. For dual-return sensors, both returns are displayed by default.

Ouster SDK CLI ouster-cli source <sensor | pcap | osf> viz visualization of OS1 128 Rev 7 sample data

The visualizer can be controlled with mouse and keyboard:

Keyboard Controls

- Camera

| Key | What it does |
|---|---|
| shift | Camera translation with mouse drag |
| w | Camera pitch down |
| s | Camera pitch up |
| a | Camera yaw right |
| d | Camera yaw left |
| q | Camera roll left |
| e | Camera roll right |
| shift+r | Reset camera |
| ctrl+r | Set camera to the birds-eye view |
| shift+1 | Top down view |
| shift+2 | Front facing view |
| shift+3 | Left facing view |
| u | Toggle camera mode FOLLOW/FIXED |
| = / - | Dolly in/out |
| 0 | Toggle orthographic camera |

- Playback

| Key | What it does |
|---|---|
| space | Toggle pause |
| . / , | Step one frame forward/back |
| ctrl + . / , | Step 10 frames forward/back |
| > / < | Increase/decrease playback rate (during replay) |

- 2D View

| Key | What it does |
|---|---|
| b / B | Cycle top 2D image |
| n / N | Cycle bottom 2D image |
| i / shift+i | Increase/decrease size of displayed 2D images |

- 3D View

| Key | What it does |
|---|---|
| p / P | Increase/decrease point size |
| m / M | Cycle point cloud coloring mode |
| f / F | Cycle point cloud color palette |
| ctrl + [N] | Enable/disable the Nth sensor cloud where N is 1 to 9 |
| 1 | Toggle first return point cloud visibility |
| 2 | Toggle second return point cloud visibility |
| 6 | Toggle scans accumulation view mode (ACCUM) |
| 7 | Toggle overall map view mode (MAP) |
| 8 | Toggle poses/trajectory view mode (TRACK) |
| 9 | Show axes |
| ' / shift+' | Increase/decrease spacing in range markers |
| ctrl + ' | Increase thickness of range markers |
| c | Cycle current highlight mode |
| j / J | Increase/decrease point size of accumulated clouds or map |
| k / K | Cycle point cloud coloring mode of accumulated clouds or map |
| g / G | Cycle point cloud color palette of accumulated clouds or map |

- Other

| Key | What it does |
|---|---|
| o | Toggle on-screen display |
| ? | Print keys to standard out |
| shift+z | Save a screenshot of the current view |
| shift+x | Toggle continuous saving of screenshots |
| v / shift+v | Cycle screenshot resolution factor |
| esc | Exit |

To run the visualizer with a sensor:

$ ouster-cli source $SENSOR_HOSTNAME viz

This auto-configures the UDP destination of the sensor while leaving the lidar port as previously set on the sensor.

To run the visualizer with a pcap:

$ ouster-cli source $SAMPLE_DATA_PCAP_PATH [--meta $SAMPLE_DATA_JSON_PATH] viz

Visualization with Ouster’s viz.PointViz

Please refer to PointViz Tutorial & API Usage for details on extending and customizing viz.PointViz.

Visualization with Open3d

The Open3d library contains Python bindings for a variety of tools for working with point cloud data. Loading data into Open3d is just a matter of reshaping the numpy representation of a point cloud, as demonstrated in the examples.pcap.pcap_3d_one_scan() example:

# compute point cloud using core.SensorInfo and core.LidarScan
xyz = core.XYZLut(metadata)(scan)
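The reshaping step mentioned above can be tried without any sensor data. The following is a minimal sketch that uses a synthetic array in place of the lookup table output; the sizes H and W are arbitrary illustrative values, not properties of any particular sensor.

```python
import numpy as np

# Stand-in for the (H, W, 3) array produced by the XYZ lookup table.
# H (rows) and W (columns) are arbitrary sizes chosen for illustration.
H, W = 4, 8
xyz = np.arange(H * W * 3, dtype=np.float64).reshape(H, W, 3)

# Collapse the two image dimensions into a single list of 3D points,
# which is the (N, 3) layout that o3d.utility.Vector3dVector accepts.
points = xyz.reshape((-1, 3))

print(points.shape)  # (32, 3)

# Flattening is row-major: pixel (row, col) ends up at index row * W + col.
assert np.array_equal(points[1 * W + 2], xyz[1, 2])
```

Because the flattening is row-major, a point can still be traced back to its image pixel by integer division and remainder on its index.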

Once you have the xyz numpy array, you can create an Open3d point cloud and visualize it as follows:

import numpy as np
import open3d as o3d

# create point cloud and coordinate axes geometries
cloud = o3d.geometry.PointCloud(
    o3d.utility.Vector3dVector(xyz.reshape((-1, 3))))  # type: ignore
axes = o3d.geometry.TriangleMesh.create_coordinate_frame(
    1.0)  # type: ignore

# initialize visualizer and rendering options
vis = o3d.visualization.Visualizer()  # type: ignore

vis.create_window()
vis.add_geometry(cloud)
vis.add_geometry(axes)
ropt = vis.get_render_option()
ropt.point_size = 1.0
ropt.background_color = np.asarray([0, 0, 0])

# initialize camera settings
ctr = vis.get_view_control()
ctr.set_zoom(0.1)
ctr.set_lookat([0, 0, 0])
ctr.set_up([1, 0, 0])

# run visualizer main loop
print("Press Q or Escape to exit")
vis.run()
vis.destroy_window()

# release the pcap or sensor source opened earlier
source.close()

The examples.open3d module contains a more fully-featured visualizer built using the Open3d library, which can be used to replay pcap files or visualize a running sensor. The bulk of the visualizer is implemented in the examples.open3d.viewer_3d() function.

Note

You’ll have to install the Open3d package from PyPI to run this example.

As an example, you can view frame 84 from the sample data by running the following command:

$ python3 -m ouster.sdk.examples.open3d_example \
    --pcap $SAMPLE_DATA_PCAP_PATH --start 84 --pause

PS > py -3 -m ouster.sdk.examples.open3d_example `
    --pcap $SAMPLE_DATA_PCAP_PATH --start 84 --pause

You may also want to try the --sensor option to display the output of a running sensor. Use the -h flag to see a full list of command line options and flags.

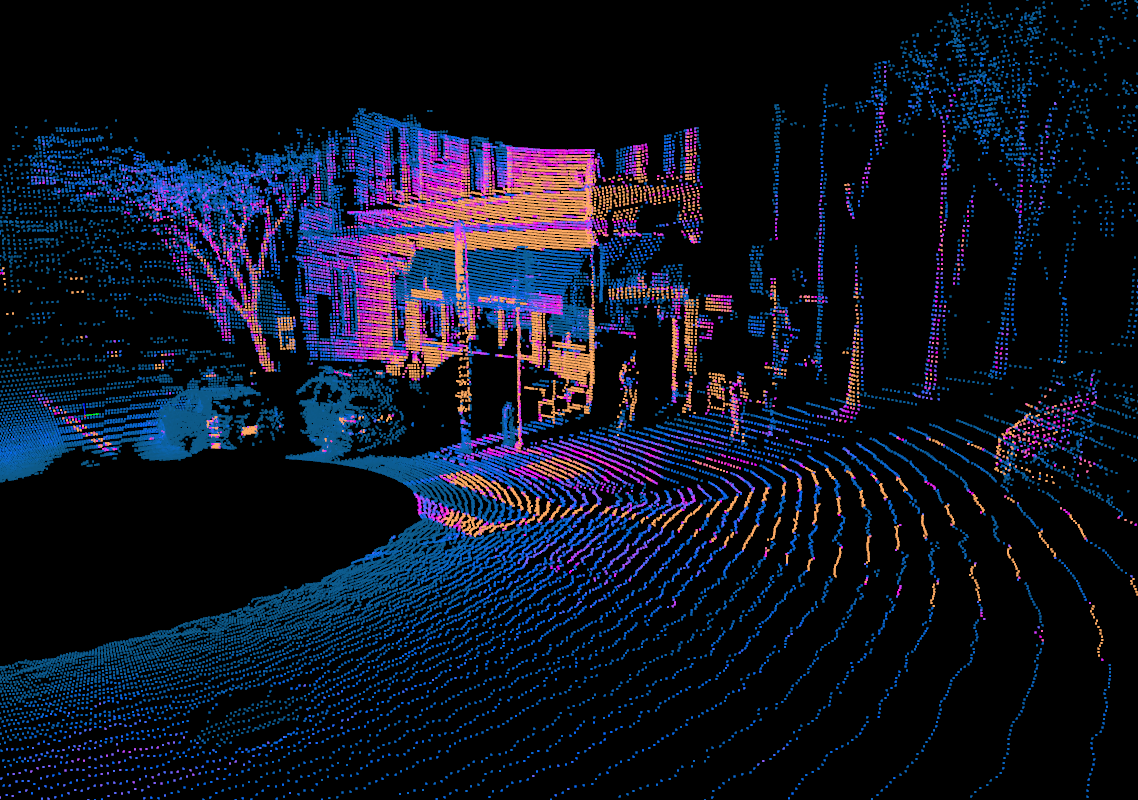

Running the example above should open a window displaying a scene from a city intersection, reproduced below:

Open3D visualization of OS1 sample data (frame 84). Points colored by SIGNAL field.

You should be able to click and drag the mouse to look around. You can zoom in and out using the mouse wheel, and hold control or shift while dragging to pan and roll, respectively.

Hitting the spacebar will start playing back the rest of the pcap in real time. Note that reasonable performance for real-time playback requires relatively fast hardware, since Open3d runs all rendering and processing in a single thread.

All of the visualizer controls are listed in the table below:

| Key | What it does |
|---|---|
| Mouse wheel | Zoom in and out |
| Left click + drag | Tilt and rotate the camera |
| Ctrl + left click + drag | Pan the camera laterally |
| Shift + left click + drag | Roll the camera |
| “+” / “-” | Increase or decrease point sizes |
| Spacebar | Pause or resume playback |
| “M” | Cycle through channel fields used for visualization |
| Right arrow key | When reading a pcap, jump 10 frames forward |

Visualization with Matplotlib

You should have defined source using either a pcap file or UDP data streaming directly from a sensor. Please refer to Developer’s Quick Start with the Ouster Python SDK for an introduction.

Note

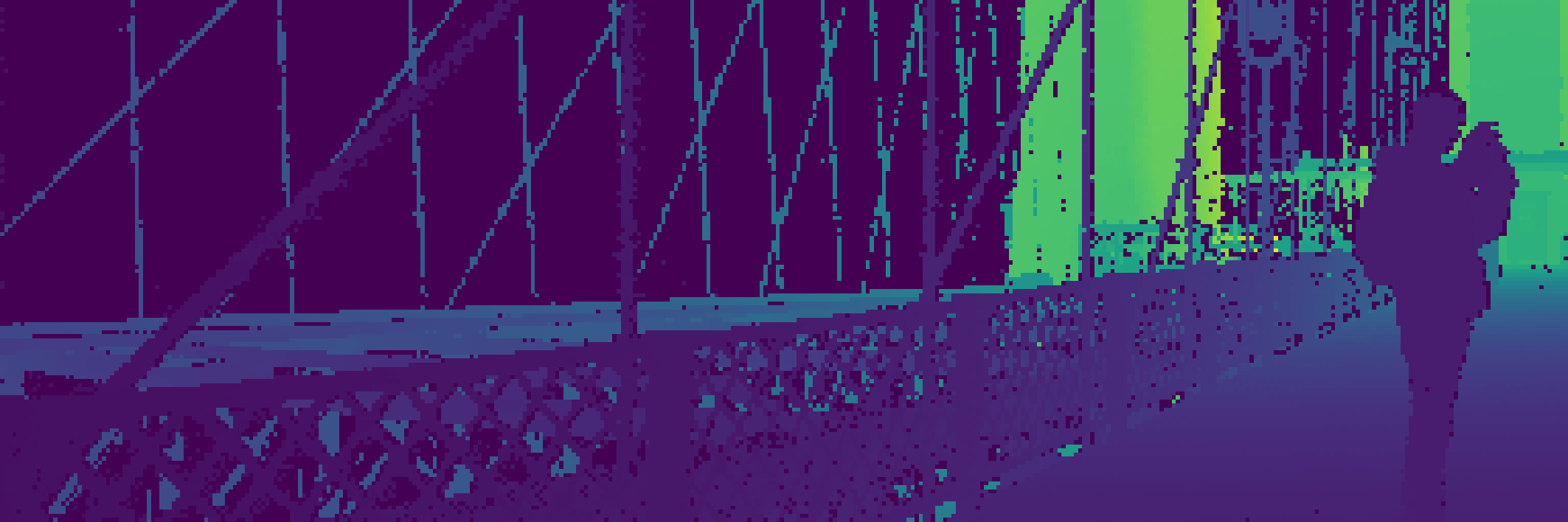

Below pictures were rendered using OS2 128 Rev 05 Bridge sample data.

Let’s read from source until we get to the 50th frame of data:

from contextlib import closing
from more_itertools import nth
import ouster.sdk.core as core

with closing(core.Scans(source)) as scans:
    scan = nth(scans, 50)

Note

If you’re using a sensor and it takes a few seconds, don’t be alarmed! It has to get to the 50th frame of data, which would be 5.0 seconds for a sensor running in 1024x10 mode.
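The wait time quoted in the note follows directly from the lidar mode string, whose second number is the frame rate in Hz. A minimal sketch of that arithmetic is shown here; the helper name seconds_until_frame is hypothetical and not part of the SDK.

```python
def seconds_until_frame(lidar_mode: str, frame_index: int) -> float:
    """Approximate wait before frame `frame_index` arrives from a live sensor.

    `lidar_mode` is a string such as "1024x10": columns per frame first,
    then frames per second. This helper is illustrative only.
    """
    _columns, rate_hz = lidar_mode.split("x")
    return frame_index / int(rate_hz)


print(seconds_until_frame("1024x10", 50))  # 5.0
print(seconds_until_frame("512x20", 50))   # 2.5
```

Reading from a pcap is not bound by this rate, since the frames are already recorded.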

We can extract the range measurements from the frame of data stored in the core.LidarScan datatype and plot a range image where each column corresponds to a single azimuth angle:

range_field = scan[0].field(core.ChanField.RANGE)

range_img = core.destagger(sensor_info, range_field)
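To give an intuition for what destaggering does, the sketch below shifts each row of a small array by its own column offset so that columns line up. This is an illustration under assumptions, not the SDK implementation: the function name destagger_sketch, the toy shift values, and the shift direction are all chosen for the example. With a real sensor the per-row offsets come from the sensor metadata.

```python
import numpy as np


def destagger_sketch(field: np.ndarray, pixel_shift_by_row) -> np.ndarray:
    """Shift each row of a staggered (H, W) field by its own column offset."""
    out = np.empty_like(field)
    for row, shift in enumerate(pixel_shift_by_row):
        # np.roll wraps values around the row end, which suits a
        # sensor with a full 360-degree horizontal field of view.
        out[row] = np.roll(field[row], shift)
    return out


# Toy 3x6 field with hypothetical per-row shifts (assumed values).
field = np.arange(18).reshape(3, 6)
shifts = [0, 1, 2]
result = destagger_sketch(field, shifts)
print(result)
```

After the shift, every column of the output holds measurements for one azimuth angle, which is why the destaggered array can be displayed directly as an image.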

We can plot the results using standard Python tools that work with numpy data types. Here, we extract a column segment of the range data and display the result:

import matplotlib.pyplot as plt

plt.imshow(range_img[:, 640:1024], resample=False)

plt.axis('off')

plt.show()

Note

If running plt.show gives you an error about your Matplotlib backend, you will need a GUI backend such as TkAgg or Qt5Agg in order to visualize your data with matplotlib.

Range image of OS2 bridge data. Data taken at Brooklyn Bridge, NYC.

In addition to viewing the data in 2D, we can also plot the results in 3D by projecting the range measurements into Cartesian coordinates. To do this, we first create a lookup table, then use it to produce X, Y, Z coordinates from our scan data with shape (H x W x 3):

xyzlut = core.XYZLut(sensor_info)

xyz = xyzlut(scan[0])
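Conceptually, the lookup table turns a range plus two beam angles into a Cartesian point. The sketch below shows only that basic spherical-to-Cartesian idea with a generic angle convention; it is a simplification that ignores sensor-specific details such as beam origin offsets, so it should not be used in place of core.XYZLut. The function name spherical_to_xyz is hypothetical.

```python
import numpy as np


def spherical_to_xyz(range_m, azimuth_deg, altitude_deg):
    """Project an (H, W) range image to (H, W, 3) Cartesian points.

    azimuth_deg holds one angle per column (W,), altitude_deg one per
    row (H,). Generic convention assumed: azimuth measured from +x
    toward +y, altitude measured up from the x-y plane.
    """
    az = np.deg2rad(np.asarray(azimuth_deg))[np.newaxis, :]    # (1, W)
    alt = np.deg2rad(np.asarray(altitude_deg))[:, np.newaxis]  # (H, 1)
    x = range_m * np.cos(alt) * np.cos(az)
    y = range_m * np.cos(alt) * np.sin(az)
    z = range_m * np.sin(alt)
    return np.stack([x, y, z], axis=-1)


# One beam row, two columns: 1 m straight ahead and 2 m at 90 degrees.
range_m = np.array([[1.0, 2.0]])
xyz_demo = spherical_to_xyz(range_m, [0.0, 90.0], [0.0])
print(xyz_demo.shape)  # (1, 2, 3)
```

Precomputing the trigonometric terms once per sensor is what makes a lookup table worthwhile: each new scan then needs only multiplications.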

Now we rearrange the resulting numpy array into a shape that’s suitable for plotting:

import numpy as np

[x, y, z] = [c.flatten() for c in np.dsplit(xyz, 3)]

ax = plt.axes(projection='3d')

r = 10

ax.set_xlim3d([-r, r])

ax.set_ylim3d([-r, r])

ax.set_zlim3d([-r/2, r/2])

plt.axis('off')

z_col = np.minimum(np.absolute(z), 5)

ax.scatter(x, y, z, c=z_col, s=0.2)

plt.show()
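The two array manipulations in the plotting code can be checked in isolation. This sketch uses random synthetic points (an assumption, in place of real scan data) to show that the np.dsplit unpacking is equivalent to a reshape and transpose, and that the color array is clamped so that a few very high or low points do not dominate the colormap.

```python
import numpy as np

# Synthetic (H, W, 3) points standing in for the lookup table output.
xyz_demo = np.random.default_rng(0).uniform(-20.0, 20.0, size=(4, 8, 3))

# Unpacking used in the plotting code: one flat array per coordinate.
x, y, z = [c.flatten() for c in np.dsplit(xyz_demo, 3)]

# Equivalent formulation: reshape to (N, 3), then transpose to (3, N).
x2, y2, z2 = xyz_demo.reshape(-1, 3).T
assert np.array_equal(x, x2)
assert np.array_equal(y, y2)
assert np.array_equal(z, z2)

# Color by absolute height, clamped to 5 so outliers share the top color.
z_col = np.minimum(np.absolute(z), 5)
print(z_col.min() >= 0, z_col.max() <= 5)  # True True
```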

Point cloud from OS2 bridge data with colormap on z. Data taken at Brooklyn Bridge, NYC.

You should be able to rotate the resulting scene to view it from different angles.

To learn more about manipulating lidar data, see:

Set coloring mode or image mode using LidarScanViz API

Starting with version 0.15.0, you can set the image mode or coloring mode through the visualization API.

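from ouster.sdk import viz, open_source
import sys

src = open_source(sys.argv[1])
sviz = None

def iter():
    global sviz
    # feed a single scan to the visualizer before selecting modes
    for scan in src:
        sviz._scan_viz.update(scan)
        break
    # select the point cloud coloring mode and the mode of the first 2D image
    sviz._scan_viz.select_cloud_mode("RANGE")
    sviz._scan_viz.select_img_mode(0, "RANGE")
    # hand the scans to the visualizer for playback
    for scan in src:
        yield scan

sviz = viz.SimpleViz(src.sensor_info)
sviz.run(iter())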
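The generator above follows a "prime, configure, then stream" pattern: one scan is pushed into the visualizer, the modes are selected, and only then are scans yielded for playback. The sketch below reproduces the control flow with stand-ins so it can be run without the SDK. FakeScanViz and primed_scans are hypothetical names, and the reading that a mode can only be selected after the visualizer has seen a scan is an assumption drawn from the ordering in the example.

```python
class FakeScanViz:
    """Hypothetical stand-in that records calls instead of drawing."""

    def __init__(self):
        self.calls = []

    def update(self, scan):
        self.calls.append(("update", scan))

    def select_cloud_mode(self, name):
        self.calls.append(("cloud_mode", name))


def primed_scans(source, scan_viz):
    # Push one scan into the visualizer first (assumed prerequisite
    # for selecting a mode by name).
    for scan in source:
        scan_viz.update(scan)
        break
    scan_viz.select_cloud_mode("RANGE")
    # Stream every scan to the caller; a re-iterable source such as a
    # list starts again from its first element here.
    yield from source


scan_viz = FakeScanViz()
scans = list(primed_scans(["scan0", "scan1", "scan2"], scan_viz))
print(scans)           # ['scan0', 'scan1', 'scan2']
print(scan_viz.calls)  # [('update', 'scan0'), ('cloud_mode', 'RANGE')]
```

Because the body of a generator does not run until it is first iterated, the setup calls happen only once the consumer starts pulling scans, which is why the original example can create the visualizer after defining the generator.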